Following Tesla’s lead, another automotive company has introduced a humanoid robot.

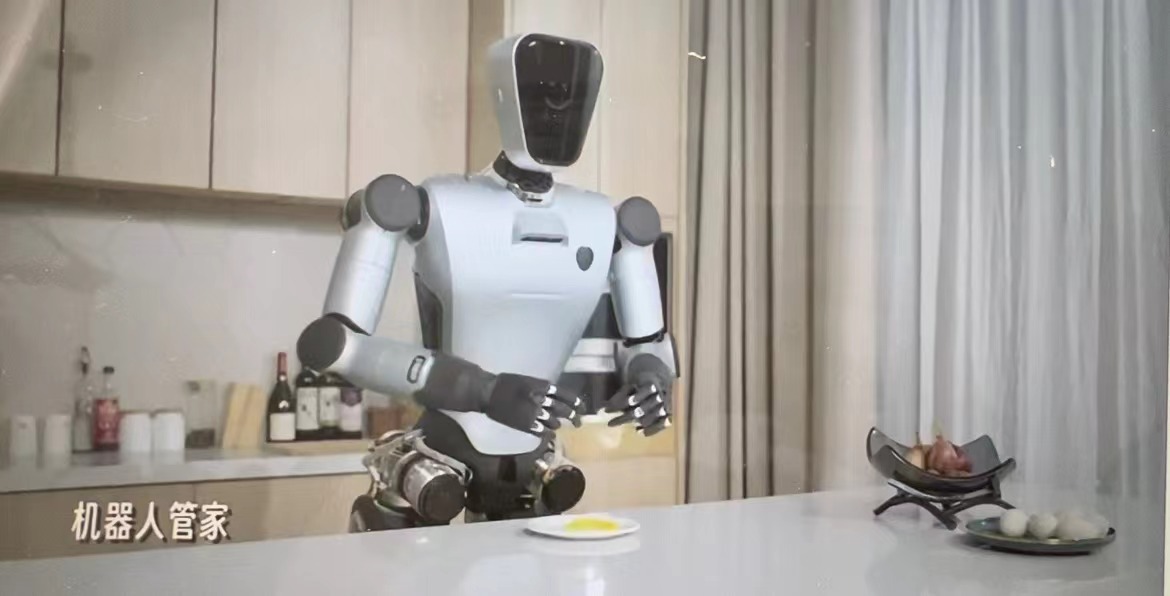

During Xpeng Motors’ Tech Day 2023 on October 24, the Chinese automaker unveiled PX5, its self-developed humanoid robot.

Measuring around 1.5 meters in height and sporting a silver-white color, PX5 bears some resemblance to the Japanese anime character Astro Boy.

The PX5 humanoid robot can withstand impacts and stay steady even when kicked. It can also maneuver on grass and rocky terrain, play football, ride Xiaomi’s self-balancing scooter, and more.

The PX5 humanoid robot has its roots in four-legged robots. As humanoid robots become increasingly popular, more automotive companies are entering this field.

Car manufacturers have a natural advantage in creating humanoid robots—they exhibit a greater propensity to pioneer new technology and possess the means to test the effectiveness of humanoid robots. Moreover, as manufacturing labor costs rise, using machines in lieu of humans has the potential to improve the cost-effectiveness of their operations.

According to He Xiaopeng, chairman of Xpeng Motors, its humanoid robot has achieved stable walking capabilities after five months of R&D. The PX5 can perform various bipedal walking maneuvers, including straight knee walking and long strides, regardless of terrain.

While the PX5 model is currently 1.5 meters tall, He expects subsequent iterations to be larger in size. A progression in size will enable the robot to take longer strides, with the eventual goal of walking 10 kilometers while bearing load and up to 100 kilometers without stumbling.

Research is also underway at Xpeng to develop new components that can improve the performance of its humanoid robots while reducing costs. For example, the company has developed high-performance joints to achieve stable walking, enabling the PX5 to walk indoors and outdoors for over two hours and overcome obstacles.

Dexterity is another technical challenge that Xpeng is actively addressing. While other companies are cautious about developing robotic hands and prefer to wait for suitable suppliers, Xpeng has developed a series of robotic hands and mechanical arms. The robotic hand has 11 degrees of freedom, with two fingers weighing only 430 grams but capable of gripping up to 1 kilogram of load. The mechanical arm has 7 degrees of freedom, a positioning accuracy of 0.05 millimeters, a maximum load of 3 kilograms, and weighs 5 kilograms.
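To put these figures in perspective, the short Python sketch below computes a payload-to-mass ratio for the hand and the arm. The ratio is an illustrative metric chosen here, not one Xpeng cited, and the sketch assumes the 430-gram figure is the mass of the gripping unit that holds the 1-kilogram load.

```python
# Figures as quoted in the article. The payload-to-mass ratio is an
# illustrative metric of our own, not a number reported by Xpeng.
HAND_MASS_KG = 0.430      # assumed: mass of the two-finger gripping unit
HAND_GRIP_LOAD_KG = 1.0   # maximum gripping load
ARM_MASS_KG = 5.0         # mass of the mechanical arm
ARM_MAX_LOAD_KG = 3.0     # maximum load of the mechanical arm


def payload_to_mass_ratio(load_kg: float, mass_kg: float) -> float:
    """Return how many times its own mass a component can carry."""
    if mass_kg <= 0:
        raise ValueError("mass_kg must be positive")
    return load_kg / mass_kg


hand_ratio = payload_to_mass_ratio(HAND_GRIP_LOAD_KG, HAND_MASS_KG)
arm_ratio = payload_to_mass_ratio(ARM_MAX_LOAD_KG, ARM_MASS_KG)

print(f"hand: {hand_ratio:.2f}x its own mass")
print(f"arm:  {arm_ratio:.2f}x its own mass")
```

Under that assumption, the hand grips a little more than twice its own mass, while the arm carries somewhat more than half of its own.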

Using the robotic hand and mechanical arm in tandem, humanoid robots can stably hold a screwdriver and perform tasks like lifting boxes, grasping pens, and pouring water into cups. Notably, these actions typically require high levels of force control, perception, and flexibility, as well as end-effector tactile capability.

According to He, Xpeng is aiming to introduce its PX5 robots to factories and stores by next year’s Tech Day event, utilizing them for tasks such as factory patrolling and in-store product sales.

Nonetheless, it’s worth noting that a consensus has yet to be established on the practical application scenarios for humanoid robots.

Enabling communication between bipedal humanoid robots and humans in stores is unlikely to be a challenging process as large models can now be integrated to achieve these basic capabilities. Instead, the real challenge is to use them in factory scenarios.

Closed factory settings are conducive to implementation, but there are considerations regarding the type of work that humanoid robots can realistically replace, and whether general-purpose or specialized robots will be more cost-effective for specific scenarios.

What is certain, however, is that with an influx of significant funding, the development and iteration speed of humanoid robots will accelerate.

He Xiaopeng’s vision for Xpeng Motors

According to He, the future of automotive companies will involve the combination of artificial intelligence-powered vehicles and robots.

The first phase of vehicle intelligence will likely integrate high-speed navigation-assisted driving and automatic parking, while the second phase is predicted to encompass urban intelligent driving features.

Xpeng is accelerating its plan for advanced driver assistance systems (ADAS). It has introduced XNGP, where NGP stands for “navigation guided pilot,” in five cities: Beijing, Shanghai, Guangzhou, Shenzhen, and Foshan. The company plans to expand to 20 additional mainland Chinese cities before extending its coverage to a total of 50 cities by December this year.

According to He, while its legacy architecture, which is based on high-precision maps, helped Xpeng achieve a breakthrough in the first five cities, the complexity of the terrain and the difficulty of keeping high-precision maps up to date have become technological bottlenecks. This drove Xpeng to develop XBrain, its new architecture to support intelligent driving.

This new “perception architecture with spatiotemporal understanding” features national coverage encompassing 73% of the country’s road network, reportedly accelerating Xpeng’s expansion to 20 times the usual speed at 10% of the original cost.

XBrain consists of modules such as deep visual neural network XNet 2.0 and neural network-based control and planning module XPlanner. The XNet 2.0 architecture combines dynamic networks, static networks, and an exclusively visual occupancy network, unifying dynamic bird’s eye view (BEV), static BEV, and occupancy networks.

XNet 2.0 utilizes large models to “comprehend” time and space, effectively deciphering information like the right junctures to enter certain lanes and the directions of tidal lanes at different times, thus improving the safety of Xpeng’s ADAS by aiding understanding of the traffic characteristics of different cities.

Equipped with strong cognitive capabilities, XNet 2.0 can also handle issues like obstruction, unclear visibility, shading, and unclear lighting, to improve the quality and data type of perception information, reinforcing the obstacle avoidance capabilities of XNGP.

Improving ADAS capabilities requires efficient data collection, model training, and deployment. Scene data is essential for training autonomous driving algorithms, but companies often struggle to collect all the necessary data. Xpeng has developed the capability to generate data of extreme scenarios using AI, providing model-generated data that can be used for machine learning in autonomous driving.

According to He, over 80% of Xpeng’s autonomous driving challenges are solved during the simulation phase, and the company’s simulation efficiency has increased by 150% in 2023.

In addition to enhancing self-driving capabilities, Xpeng also uses large models and generative AI for other functions.

According to Li Liyun, head of autonomous driving at Xpeng, the company has applied generative AI in various R&D areas, increasing coding efficiency by 15%. In the design domain, generative AI has cut the time required to move from design sketches to actual vehicle effects, at the same design quality, from an average of 23 days to 6 days.
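For readers who want the design-time claim as a percentage, the following Python sketch works through the arithmetic. Only the 23-day and 6-day figures come from the article. The function name and the derived percentages are illustrative and were not stated by Xpeng.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the reduction from `before` to `after` as a percentage of `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Average days from design sketch to vehicle effect, as quoted in the article.
DAYS_BEFORE = 23
DAYS_AFTER = 6

design_cut = percent_reduction(DAYS_BEFORE, DAYS_AFTER)
design_speedup = DAYS_BEFORE / DAYS_AFTER

print(f"design time cut by about {design_cut:.0f}%")
print(f"roughly {design_speedup:.1f}x faster")
```

In other words, the quoted figures imply that the design step takes about a quarter of the time it used to.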

Xpeng has also developed X GPT’s Lingxi large model and integrated it into its intelligent cockpit voice system to enhance voice interaction capabilities.

As AI and large models continue to redefine cars, their integration offers opportunities to improve the efficiency of vehicles. Xpeng offers the automotive industry a case study in putting large models into practice.

KrASIA Connection features translated and adapted content that was originally published by 36Kr. This article was written by Yang Xiao for 36Kr.